The 2026 AI/ML on Kubernetes Stack: vLLM, Kueue, KAITO, KServe, llm-d, Ray

The complete 2026 AI/ML on Kubernetes stack guide - vLLM for LLM inference, Kueue for GPU scheduling, KAITO on AKS, KServe for model serving, llm-d for distributed inference, Ray for training, NVIDIA GPU Operator. Architecture patterns and production-ready deployment.

AI/ML on Kubernetes crossed the production-default line in 2025-2026. What was a specialist configuration 18 months ago is now the expected deployment pattern for any organization running ML at scale. The stack consolidated around a specific set of CNCF and vendor-backed tools, with a new generation of components (KAITO, llm-d) addressing the most demanding 2026 workloads.

This guide maps the complete 2026 AI/ML on Kubernetes stack - the tools that production ML teams actually run, what each does, and how they fit together. Coverage spans vLLM (LLM inference), Kueue (GPU scheduling), KAITO (AKS operator), KServe (model serving), llm-d (distributed inference), Ray (training), MLflow (tracking), and NVIDIA GPU Operator (GPU management).

Why AI/ML on Kubernetes Won

The debates of three years ago over whether Kubernetes was the right substrate for ML are settled. The 2026 consensus answer is yes, because:

- Multi-tenant GPU sharing - Kueue + MIG + time-slicing enable efficient GPU utilization across teams in ways cloud provider managed services struggle with

- Portability - the same stack runs on AWS EKS, Azure AKS, GCP GKE, Oracle OKE, on-premises, and sovereign clouds (Core42, Stargate UAE)

- Ecosystem maturity - KServe has joined the CNCF, Kueue is developed under Kubernetes SIG Scheduling, and adjacent projects have matured alongside them; tooling is no longer bleeding-edge

- Cost discipline - node-level cost optimization via Karpenter + workload rightsizing beats many managed ML services at scale

- Regulatory fit - data residency, multi-cloud portability, and audit trails map cleanly to CBUAE AI Guidance, EU AI Act, and FDA SaMD requirements

Organizations still on cloud-provider-native ML platforms (SageMaker, Vertex AI, Azure ML) increasingly use them for development and experimentation while production inference and training moves to Kubernetes-based stacks.

The 2026 Stack Components

Inference: vLLM

vLLM (UC Berkeley Sky Computing Lab, open-source) is the 2026 default LLM inference engine.

Core value: PagedAttention for KV cache management, continuous batching for throughput, OpenAI-compatible API out of the box. Supports Llama, Mixtral, Qwen, DeepSeek, and most open-weight models.

Deployment pattern: typically run as a Kubernetes Deployment whose pods are scheduled onto GPU nodes (or, less commonly, as a DaemonSet on dedicated GPU nodes), exposing the OpenAI-compatible API. Often wrapped in a KServe InferenceService for multi-model routing.
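As a rough illustration of that pattern, the sketch below builds a plain Kubernetes Deployment for vLLM's OpenAI-compatible server as a Python dict and prints it as JSON, which kubectl apply -f accepts. The image tag, model name, and resource sizes are placeholder assumptions; --model, --tensor-parallel-size, and --port are vLLM server arguments, but check them against the vLLM version you deploy.

```python
import json


def vllm_deployment(name: str, model: str, gpus: int = 1,
                    image: str = "vllm/vllm-openai:latest") -> dict:
    """Return a Deployment manifest (as a dict) serving `model` with vLLM.

    All defaults are illustrative placeholders, not recommendations.
    """
    labels = {"app": name}
    container = {
        "name": "vllm",
        "image": image,
        # Arguments are appended to the image's OpenAI-compatible server entrypoint.
        "args": [
            "--model", model,
            # Shard the model across all GPUs requested by this pod.
            "--tensor-parallel-size", str(gpus),
            "--port", "8000",
        ],
        "ports": [{"name": "http", "containerPort": 8000}],
        # The NVIDIA device plugin advertises GPUs as the nvidia.com/gpu resource.
        "resources": {"limits": {"nvidia.com/gpu": gpus}},
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical model name; substitute the weights you are licensed to serve.
    manifest = vllm_deployment("llama-8b", "meta-llama/Llama-3.1-8B-Instruct")
    print(json.dumps(manifest, indent=2))
```

Redirect the output to a file and apply it with kubectl apply -f. Multi-GPU tensor parallelism usually also needs a larger /dev/shm volume, which this sketch omits.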

When to use: any LLM inference workload where throughput matters. For single-model, high-traffic inference, vLLM is the default choice in 2026. See our Running LLMs on Kubernetes with vLLM deep dive for configuration details.

Distributed Inference: llm-d

llm-d (emerging 2025-2026) is a distributed LLM inference framework for Kubernetes, purpose-built for models too large for single-node GPU capacity.

Core value: disaggregated serving (prefill and decode as separate phases), KV cache offloading between nodes, cross-node tensor parallelism, and distributed batching.

When to use: frontier open-weight models (for example Llama 3.1 405B, DeepSeek V3, or Qwen3 235B) that exceed a single 8-GPU node's memory at the precision and context length you serve them. For smaller models that fit on a single node, vLLM is simpler and sufficient.
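Whether a model exceeds single-node capacity depends heavily on precision. A back-of-the-envelope check that counts weights only (parameters times bytes per parameter) is sketched below. The 70% weight budget, which leaves the rest of GPU memory for KV cache and runtime overhead, is an illustrative assumption rather than a vLLM or llm-d rule; 80 GB and 141 GB are the per-GPU memory of H100 SXM and H200 respectively.

```python
# Weights-only sizing: parameters x bytes per parameter.
# Ignores KV cache, activations, and framework overhead.
BYTES_PER_PARAM = {"bf16": 2.0, "fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_gb(params_billion: float, dtype: str) -> float:
    """Approximate weight footprint in GB (1 GB = 10**9 bytes)."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits_on_node(params_billion: float, dtype: str, gpus_per_node: int = 8,
                 gb_per_gpu: float = 80.0, weight_budget: float = 0.7) -> bool:
    """True if the weights fit in the assumed share of one node's GPU memory."""
    usable_gb = gpus_per_node * gb_per_gpu * weight_budget
    return weight_gb(params_billion, dtype) <= usable_gb


if __name__ == "__main__":
    for params, dtype, gb in [(405, "bf16", 80.0), (405, "fp8", 80.0),
                              (671, "fp8", 80.0), (671, "fp8", 141.0)]:
        print(f"{params}B {dtype} on 8x{gb:g}GB: "
              f"{weight_gb(params, dtype):.0f} GB weights, "
              f"fits={fits_on_node(params, dtype, gb_per_gpu=gb)}")
```

Under these assumptions a 405B model in BF16 (about 810 GB of weights) does not fit on an 8x80 GB node while the same model in FP8 (about 405 GB) does, and a 671B-parameter model such as DeepSeek V3 in FP8 only fits on the larger 8x141 GB node.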

Production maturity: rapidly evolving in 2026, with early adopters at AI-native companies and research institutions. The likely trajectory is toward a standard production-grade tool by mid-2027.

Model Serving Framework: KServe

KServe (a CNCF project) is the Kubernetes-native model serving framework.

Core value: wraps inference engines (vLLM, Triton, TensorFlow Serving, ONNX Runtime, custom containers) with Kubernetes-native features: autoscaling (including scale-to-zero in its Knative-based serverless mode), canary deployments, traffic splitting, multi-model endpoints, and transformers for request pre-processing and response post-processing.

When to use: production ML serving beyond single-model single-endpoint. Particularly valuable for teams serving multiple models, running A/B tests on model versions, or needing scale-to-zero for cost-sensitive workloads.
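A minimal sketch of an InferenceService for an LLM, again built as a Python dict, is shown below. It assumes KServe's Hugging Face serving runtime (modelFormat huggingface), which can use vLLM as its backend for supported generative models; the --model_name and --model_id arguments follow KServe's Hugging Face runtime examples, and the model and service names are placeholders. minReplicas of 0 (scale-to-zero) and canaryTrafficPercent assume KServe's Knative-based serverless deployment mode.

```python
import json
from typing import Optional


def llm_inference_service(name: str, model_id: str, min_replicas: int = 1,
                          canary_percent: Optional[int] = None) -> dict:
    """Return a KServe InferenceService manifest (as a dict) for an LLM."""
    predictor = {
        # 0 allows scale-to-zero; keep >= 1 for latency-critical endpoints.
        "minReplicas": min_replicas,
        "model": {
            "modelFormat": {"name": "huggingface"},
            "args": [f"--model_name={name}", f"--model_id={model_id}"],
            "resources": {"limits": {"nvidia.com/gpu": 1}},
        },
    }
    if canary_percent is not None:
        # On an update, send this share of traffic to the new revision
        # and keep the rest on the last ready revision.
        predictor["canaryTrafficPercent"] = canary_percent
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {"predictor": predictor},
    }


if __name__ == "__main__":
    # Hypothetical rollout: 10% of traffic to the updated model revision.
    isvc = llm_inference_service("chat-llm", "meta-llama/Llama-3.1-8B-Instruct",
                                 canary_percent=10)
    print(json.dumps(isvc, indent=2))
```

Re-applying the manifest with a new model_id and a small canaryTrafficPercent is the canary pattern; per KServe's canary rollout docs, removing the field afterwards promotes the new revision to all traffic.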

Alternative: for single-model LLM serving, vLLM alone may suffice without KServe. KServe's value increases with model diversity and deployment complexity.

GPU Scheduling: Kueue

Kueue (a Kubernetes SIG Scheduling subproject) is a Kubernetes-native job queueing system optimized for batch and AI/ML workloads.

Core value: fair-share GPU scheduling across teams, quota enforcement through ClusterQueues (which LocalQueues connect to team namespaces), preemption for priority workloads, and all-or-nothing (gang) admission for distributed training jobs.

Why it matters: without a queueing layer, GPU clusters suffer head-of-line blocking - one team’s large training job monopolizes GPUs while other teams wait. Kueue enables multi-tenant GPU fairness, pushing utilization from 25-35% baseline to 60-85%.

Production deployment: Kueue runs as a cluster-scoped controller. ClusterQueue resources define capacity pools and quotas (often one per team, grouped into a cohort so unused quota can be borrowed), and namespaced LocalQueue resources point each team's namespace at its ClusterQueue.
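The sketch below shows that layout for one team: a ResourceFlavor, a ClusterQueue holding the team's quota inside a shared cohort, and a LocalQueue in the team's namespace. It targets the kueue.x-k8s.io/v1beta1 API; some field names (for example cohort) change in newer API versions, so treat them as assumptions to check against your installed Kueue release. Team names, namespaces, and quota values are hypothetical.

```python
import json

KUEUE_API = "kueue.x-k8s.io/v1beta1"


def resource_flavor(name: str) -> dict:
    # A flavor without node labels matches any node; real clusters usually
    # label flavors by GPU type (e.g. H100 vs A100).
    return {"apiVersion": KUEUE_API, "kind": "ResourceFlavor",
            "metadata": {"name": name}}


def team_cluster_queue(team: str, flavor: str, cpu: int, memory: str,
                       gpus: int, cohort: str = "ml-platform") -> dict:
    return {
        "apiVersion": KUEUE_API,
        "kind": "ClusterQueue",
        "metadata": {"name": f"{team}-cq"},
        "spec": {
            # Queues in the same cohort can borrow each other's unused quota.
            "cohort": cohort,
            "namespaceSelector": {},  # empty selector: any namespace may submit
            "resourceGroups": [{
                "coveredResources": ["cpu", "memory", "nvidia.com/gpu"],
                "flavors": [{
                    "name": flavor,
                    "resources": [
                        {"name": "cpu", "nominalQuota": cpu},
                        {"name": "memory", "nominalQuota": memory},
                        {"name": "nvidia.com/gpu", "nominalQuota": gpus},
                    ],
                }],
            }],
            "preemption": {
                # Reclaim lent quota from borrowers when this team needs it back.
                "reclaimWithinCohort": "Any",
                # Inside the team's own queue, preempt only lower-priority work.
                "withinClusterQueue": "LowerPriority",
            },
        },
    }


def team_local_queue(team: str, namespace: str) -> dict:
    return {"apiVersion": KUEUE_API, "kind": "LocalQueue",
            "metadata": {"name": f"{team}-queue", "namespace": namespace},
            "spec": {"clusterQueue": f"{team}-cq"}}


if __name__ == "__main__":
    objs = [resource_flavor("h100"),
            team_cluster_queue("team-a", "h100", cpu=64, memory="512Gi", gpus=8),
            team_local_queue("team-a", "team-a")]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": objs}, indent=2))
```

Workloads opt in by carrying the kueue.x-k8s.io/queue-name: team-a-queue label; Kueue keeps them suspended until the ClusterQueue has quota to admit them.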

Training at Scale: Ray + KubeRay

Ray is a distributed computing framework for Python with particular strength in ML training, hyperparameter tuning, and reinforcement learning.

KubeRay is the Kubernetes operator that manages Ray clusters as native Kubernetes resources (RayCluster CRDs).

Core value: distributed training across GPU nodes, Ray Tune for parallel hyperparameter sweeps, Ray RLlib for reinforcement learning, and native integration with PyTorch/TensorFlow/JAX.

When to use: any training workload too large for a single node. Hyperparameter tuning campaigns. Reinforcement learning. Model training that benefits from Ray’s actor model and distributed data parallelism.
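For illustration, the sketch below builds a KubeRay RayCluster (ray.io/v1) with a CPU-only head and a fixed-size GPU worker group. The kueue.x-k8s.io/queue-name label assumes Kueue's RayCluster integration is enabled, so the whole cluster is admitted against a team quota; the image tag, resource sizes, and queue name are placeholder assumptions.

```python
import json


def ray_cluster(name: str, namespace: str, queue: str, gpu_workers: int,
                ray_version: str = "2.9.0") -> dict:
    """Return a RayCluster manifest (as a dict); sizes are illustrative."""
    image = f"rayproject/ray:{ray_version}-gpu"

    def pod_template(container_name: str, gpus: int) -> dict:
        limits = {"cpu": 8, "memory": "32Gi"}
        if gpus:
            limits["nvidia.com/gpu"] = gpus
        return {"spec": {"containers": [
            {"name": container_name, "image": image,
             "resources": {"limits": limits}}]}}

    return {
        "apiVersion": "ray.io/v1",
        "kind": "RayCluster",
        "metadata": {
            "name": name,
            "namespace": namespace,
            # Lets Kueue queue and admit the whole cluster as one workload.
            "labels": {"kueue.x-k8s.io/queue-name": queue},
        },
        "spec": {
            "rayVersion": ray_version,
            "headGroupSpec": {
                "rayStartParams": {},
                "template": pod_template("ray-head", gpus=0),
            },
            "workerGroupSpecs": [{
                "groupName": "gpu-workers",
                # Fixed size: min == max == replicas (no Ray autoscaling).
                "replicas": gpu_workers,
                "minReplicas": gpu_workers,
                "maxReplicas": gpu_workers,
                "rayStartParams": {},
                "template": pod_template("ray-worker", gpus=1),
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(ray_cluster("tune-sweep", "team-a", "team-a-queue", 4), indent=2))
```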

Alternative: Kubeflow Training Operator (CNCF) covers similar scope with a different programming model. Kubeflow has a broader ecosystem; Ray has better ergonomics for Python-first ML teams. Both are credible in 2026; the choice often comes down to existing team familiarity.

Experiment Tracking: MLflow

MLflow (Apache 2.0, originally Databricks) is the de facto standard for ML experiment tracking in 2026.

Core value: log experiments with hyperparameters, metrics, artifacts, and code versions. Model registry for versioned model deployment. Packaging format for portable ML projects.

Kubernetes deployment: MLflow tracking server + Postgres + S3-compatible object storage for artifacts. Typically deployed via Helm chart.
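The wiring between those three pieces is mostly command-line flags on mlflow server. A minimal container spec is sketched below as a Python dict: the Postgres connection string is read from a Kubernetes Secret and substituted into the arguments with Kubernetes' $(VAR) expansion, and artifacts go to an S3 bucket. The image, Secret name, and bucket are hypothetical; the image must include the Postgres and S3 client libraries, and S3 credentials (or MLFLOW_S3_ENDPOINT_URL for S3-compatible stores) still have to be supplied separately.

```python
import json


def mlflow_server_container(image: str, artifact_bucket: str,
                            db_secret: str) -> dict:
    """Container spec for an MLflow tracking server (illustrative)."""
    return {
        "name": "mlflow",
        "image": image,
        "command": ["mlflow", "server"],
        "args": [
            # Kubernetes expands $(BACKEND_STORE_URI) from the env var below.
            "--backend-store-uri", "$(BACKEND_STORE_URI)",
            # Artifact proxying: clients upload artifacts through the server.
            "--artifacts-destination", f"s3://{artifact_bucket}",
            "--serve-artifacts",
            "--host", "0.0.0.0",
            "--port", "5000",
        ],
        "env": [{
            "name": "BACKEND_STORE_URI",
            # e.g. postgresql://user:password@postgres:5432/mlflow, kept out of the manifest
            "valueFrom": {"secretKeyRef": {"name": db_secret, "key": "uri"}},
        }],
        "ports": [{"name": "http", "containerPort": 5000}],
    }


if __name__ == "__main__":
    print(json.dumps(mlflow_server_container(
        "example.registry/mlflow:latest", "mlflow-artifacts", "mlflow-db"), indent=2))
```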

Alternative: Weights & Biases (commercial SaaS), Neptune, Comet for teams wanting hosted alternatives. See aiml.qa’s LLM evaluation framework benchmark for the related evaluation stack.

GPU Management: NVIDIA GPU Operator

NVIDIA GPU Operator (open source, maintained by NVIDIA) automates GPU node management on Kubernetes.

Core value: installs GPU drivers, the NVIDIA Container Toolkit (container runtime), the Kubernetes device plugin, DCGM Exporter for monitoring, Node Feature Discovery and GPU Feature Discovery for node labeling, and the MIG manager. Replaces error-prone node-by-node manual configuration.

Features:

- MIG (Multi-Instance GPU) - partition A100/H100 into multiple isolated instances

- Time-slicing - share a single GPU across multiple pods (less isolation than MIG but simpler)

- vGPU - virtualized GPUs, mainly for workloads running inside virtual machines

- DCGM monitoring - GPU utilization, temperature, throttling, memory metrics

Production necessity: required for any serious GPU workload on Kubernetes. Deployment is typically a single Helm install; GPU nodes are then detected and labeled automatically through Node Feature Discovery.
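Time-slicing, for example, is configured through a device-plugin ConfigMap that the GPU Operator's ClusterPolicy references. The sketch below generates such a ConfigMap, advertising each physical GPU as four schedulable nvidia.com/gpu replicas, plus the ClusterPolicy merge patch. The structure follows NVIDIA's GPU Operator time-slicing documentation as we understand it; the names (time-slicing-config, key any, namespace gpu-operator) are assumptions to verify against your Operator version.

```python
import json


def time_slicing_configmap(replicas: int, name: str = "time-slicing-config",
                           namespace: str = "gpu-operator", key: str = "any") -> dict:
    """ConfigMap telling the NVIDIA device plugin to oversubscribe each GPU."""
    plugin_config = {
        "version": "v1",
        "sharing": {"timeSlicing": {"resources": [
            # Each physical GPU is advertised as `replicas` nvidia.com/gpu units.
            {"name": "nvidia.com/gpu", "replicas": replicas},
        ]}},
    }
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": namespace},
        # A simple JSON document like this one is also valid YAML.
        "data": {key: json.dumps(plugin_config)},
    }


def cluster_policy_patch(config_name: str = "time-slicing-config",
                         default_key: str = "any") -> dict:
    """Merge patch pointing the ClusterPolicy's device plugin at the ConfigMap."""
    return {"spec": {"devicePlugin": {"config": {"name": config_name,
                                                 "default": default_key}}}}


if __name__ == "__main__":
    print(json.dumps(time_slicing_configmap(replicas=4), indent=2))
    print(json.dumps(cluster_policy_patch()))
```

The patch can then be applied with kubectl patch clusterpolicies.nvidia.com/cluster-policy --type merge -p '<patch JSON>'. Time-sliced replicas share GPU memory and have no fault isolation between pods, which is the trade-off against MIG.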

Azure-Specific: KAITO

KAITO (Kubernetes AI Toolchain Operator) is Microsoft’s open-source operator for managing AI/ML workloads on AKS.

Core value: automates GPU node pool provisioning, pre-packaged model deployments (including large open-weight LLMs), inference serving, and fine-tuning workflows. Azure-opinionated but deployable on other Kubernetes distributions.

When to use: AKS deployments where KAITO’s managed-simplicity model is preferable to assembling vLLM + KServe + Kueue manually. Azure-native organizations with significant AKS investment.
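KAITO's unit of work is a Workspace custom resource that names a GPU instance type and a preset model; KAITO then provisions the nodes and deploys the inference server. A sketch modeled on the project's published examples is below. The API version (kaito.sh/v1beta1), preset name, and Azure VM size are assumptions that vary between KAITO releases. Note that resource and inference sit at the top level of the object rather than under spec.

```python
import json


def kaito_workspace(name: str, preset: str, instance_type: str,
                    app_label: str) -> dict:
    """Return a KAITO Workspace manifest (as a dict) for a preset model."""
    return {
        "apiVersion": "kaito.sh/v1beta1",
        "kind": "Workspace",
        "metadata": {"name": name},
        # GPU node shape KAITO should provision, and the label for those nodes.
        "resource": {
            "instanceType": instance_type,
            "labelSelector": {"matchLabels": {"apps": app_label}},
        },
        # Refers to one of KAITO's pre-packaged model configurations.
        "inference": {"preset": {"name": preset}},
    }


if __name__ == "__main__":
    ws = kaito_workspace("workspace-phi-3-5-mini", "phi-3.5-mini-instruct",
                         "Standard_NC24ads_A100_v4", "phi-3-5")
    print(json.dumps(ws, indent=2))
```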

Alternative: for non-Azure deployments, vLLM + KServe + Kueue typically replaces KAITO’s role with more flexibility and vendor-neutrality.

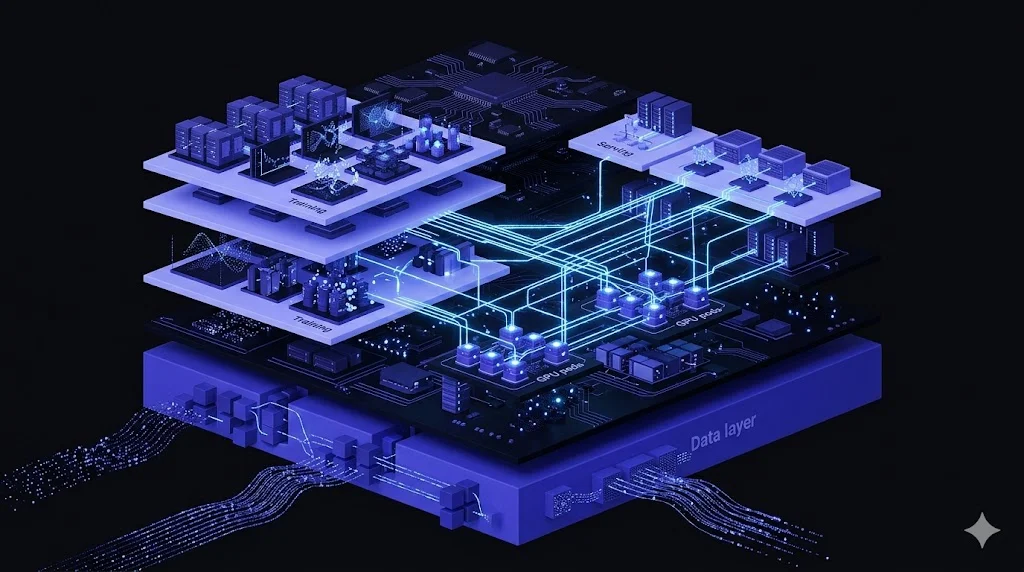

Reference Architecture

A production-grade 2026 AI/ML Kubernetes stack typically includes:

```
┌──────────────────────────────────────────────────────────┐
│ Application Layer                                        │
│ (Customer-facing apps, internal tools, agent frameworks) │
└─────────────────────────────┬────────────────────────────┘
                              │
┌─────────────────────────────┴────────────────────────────┐
│ Inference Layer                                          │
│ KServe InferenceService → vLLM (LLM) or Triton (multi)   │
│ llm-d for frontier models (70B+)                         │
└─────────────────────────────┬────────────────────────────┘
                              │
┌─────────────────────────────┴────────────────────────────┐
│ Scheduling Layer                                         │
│ Kueue (GPU queueing, fair-share, quotas)                 │
│ Karpenter (node provisioning, spot, bin-packing)         │
└─────────────────────────────┬────────────────────────────┘
                              │
┌─────────────────────────────┴────────────────────────────┐
│ GPU Infrastructure Layer                                 │
│ NVIDIA GPU Operator (drivers, runtime, device plugin)    │
│ MIG / Time-slicing / vGPU configuration                  │
│ DCGM Exporter → Prometheus → Grafana                     │
└─────────────────────────────┬────────────────────────────┘
                              │
┌─────────────────────────────┴────────────────────────────┐
│ Training / Experimentation                               │
│ Ray (KubeRay) or Kubeflow Training Operator              │
│ MLflow tracking + model registry                         │
│ Jupyter notebooks (JupyterHub or Kubeflow)               │
└──────────────────────────────────────────────────────────┘
```

Plus supporting infrastructure that’s non-negotiable:

- Cost tooling: Kubecost / OpenCost + Karpenter (see our cost optimization tools comparison)

- Observability: Prometheus, Grafana, OpenTelemetry, Loki (or Datadog, Dynatrace for commercial)

- Secret management: External Secrets Operator + Vault / AWS Secrets Manager / Azure Key Vault

- GitOps: ArgoCD or Flux (see our GitOps patterns)

- Security: Kubescape, Trivy Operator, Falco for runtime

Deployment Sequence

For teams starting from scratch:

Phase 1 (Weeks 1-2): Foundation

- Install NVIDIA GPU Operator on GPU node pools

- Configure Karpenter for dynamic node provisioning (including GPU node types)

- Deploy DCGM Exporter + Prometheus + Grafana for GPU observability

Phase 2 (Weeks 3-4): Scheduling and Queueing

- Deploy Kueue with ClusterQueue and LocalQueue resources

- Define team quotas and fair-share policies

- Configure MIG or time-slicing for GPU sharing

Phase 3 (Weeks 5-6): Inference Serving

- Deploy vLLM for primary LLM workloads

- Install KServe for multi-model serving and advanced deployment patterns

- Configure autoscaling (KEDA with inference-specific metrics, not just CPU)
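For the autoscaling step, one option is a KEDA ScaledObject with a Prometheus trigger on vLLM's queue depth (vllm:num_requests_waiting) instead of CPU. The sketch below assumes Prometheus already scrapes the vLLM pods and that the series carry vLLM's model_name label; the Deployment name, Prometheus address, and threshold are placeholders to tune.

```python
import json


def vllm_scaled_object(deployment: str, model_name: str, prometheus_url: str,
                       waiting_per_replica: int = 5, min_replicas: int = 1,
                       max_replicas: int = 8) -> dict:
    """KEDA ScaledObject scaling a vLLM Deployment on queued requests."""
    # Total requests waiting for a batch slot across all replicas of this model.
    query = f'sum(vllm:num_requests_waiting{{model_name="{model_name}"}})'
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": f"{deployment}-scaler"},
        "spec": {
            "scaleTargetRef": {"name": deployment},
            "minReplicaCount": min_replicas,
            "maxReplicaCount": max_replicas,
            "triggers": [{
                "type": "prometheus",
                "metadata": {
                    "serverAddress": prometheus_url,
                    "query": query,
                    # Target value per replica; KEDA metadata values are strings.
                    "threshold": str(waiting_per_replica),
                },
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(vllm_scaled_object(
        "llama-8b", "meta-llama/Llama-3.1-8B-Instruct",
        "http://prometheus.monitoring.svc:9090"), indent=2))
```

With a threshold of 5, the HPA that KEDA manages aims for roughly five waiting requests per replica, so 20 queued requests push it toward four replicas (within the min/max bounds). Keeping minReplicaCount at 1 or more also avoids cold starts.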

Phase 4 (Weeks 7-8): Training Infrastructure

- Deploy KubeRay operator and first Ray cluster

- Deploy MLflow tracking server with Postgres and object storage

- Configure JupyterHub or Kubeflow notebooks for research

Phase 5 (Weeks 9-12): Advanced Optimization

- Tune vLLM memory and batching parameters (for example --gpu-memory-utilization, --max-num-seqs, --max-num-batched-tokens) for throughput

- Implement progressive model rollouts via KServe canary

- Add cost attribution via Kubecost/OpenCost with GPU allocation

- Consider llm-d for frontier-model deployments exceeding single-node capacity

Shorter timelines are possible - our team typically deploys this stack in 10-14 days for teams with existing Kubernetes fluency.

Choosing Your GPU Strategy

GPU allocation is the biggest architectural decision:

Single-tenant dedicated GPUs: simplest. Each team gets dedicated GPU nodes. Low utilization (often 25-35%) but zero contention. Suits small teams or production-critical workloads.

MIG partitioning (A100, H100, H200): slices a physical GPU into up to seven instances with hardware-isolated memory and compute. Best for inference workloads where a single instance has enough memory and compute to serve a request on its own.

Time-slicing: software-level GPU sharing. Multiple pods share a GPU with time-division. Lower isolation than MIG but works on all GPU generations. Good for development workloads.

Fractional GPUs via vGPU: NVIDIA vGPU driver-level partitioning. Requires licensing but offers flexibility.

Dynamic allocation via Kueue + MIG: Kueue admits jobs, MIG partitions match admitted job size, GPU pool dynamically repartitioned as workloads change. Most sophisticated pattern, highest utilization, highest operational complexity.

For most 2026 deployments starting from scratch, MIG partitioning on H100/H200 + Kueue scheduling is the high-leverage default. Time-slicing for development, MIG for production.
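Under the GPU Operator's "mixed" MIG strategy, each MIG profile is exposed as its own extended resource (for example nvidia.com/mig-1g.10gb), so a pod requests a slice by name. The sketch below picks the smallest profile whose memory covers a workload's estimate and builds a matching pod. The profile table is our reading of the H100 80 GB profiles in NVIDIA's MIG documentation and should be checked for your GPU model; the pod and image names are hypothetical.

```python
import json
from typing import Optional

# (profile, memory in GB), ordered from smallest slice to full GPU.
# Assumed H100 80 GB profile set; A100 and H200 profiles differ.
H100_MIG_PROFILES = [
    ("1g.10gb", 10), ("1g.20gb", 20), ("2g.20gb", 20),
    ("3g.40gb", 40), ("4g.40gb", 40), ("7g.80gb", 80),
]


def smallest_profile(mem_needed_gb: float) -> Optional[str]:
    """First (smallest) profile with enough memory, or None if none fits."""
    for profile, mem_gb in H100_MIG_PROFILES:
        if mem_gb >= mem_needed_gb:
            return profile
    return None


def mig_pod(name: str, image: str, profile: str) -> dict:
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {"containers": [{
            "name": "server",
            "image": image,
            # Mixed strategy: the MIG profile is part of the resource name.
            "resources": {"limits": {f"nvidia.com/mig-{profile}": 1}},
        }]},
    }


if __name__ == "__main__":
    profile = smallest_profile(15)  # e.g. a small model plus KV cache headroom
    print(profile)
    print(json.dumps(mig_pod("small-llm", "example.registry/server:latest", profile), indent=2))
```

Which profiles actually exist on a node is set by the MIG manager, typically through the nvidia.com/mig.config node label (for example all-1g.10gb).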

Observability Patterns

GPU observability requires metrics beyond standard pod metrics:

DCGM Exporter (part of NVIDIA GPU Operator) emits GPU-specific metrics:

- DCGM_FI_DEV_GPU_UTIL - GPU utilization percentage

- DCGM_FI_DEV_MEM_COPY_UTIL - memory bandwidth utilization

- DCGM_FI_DEV_FB_USED - frame buffer (memory) used

- DCGM_FI_DEV_GPU_TEMP - GPU temperature

- DCGM_FI_DEV_POWER_USAGE - power consumption

vLLM metrics (via Prometheus endpoint):

- vllm:time_to_first_token_seconds - TTFT latency

- vllm:time_per_output_token_seconds - per-token latency

- vllm:generation_tokens_total - throughput

- vllm:gpu_cache_usage_perc - KV cache utilization

- vllm:num_requests_running - active request count

KServe metrics: inference latency, request volume, error rates, autoscaler decisions.

Combine these into Grafana dashboards showing GPU utilization alongside inference latency. Alert on P95 time-to-first-token regressions (often the first signal of upstream model update impact) and GPU throttle events.
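P95 TTFT is usually computed in PromQL from vLLM's cumulative histogram buckets, for example histogram_quantile(0.95, sum by (le) (rate(vllm:time_to_first_token_seconds_bucket[5m]))). To show what that function does, the sketch below re-implements its core linear interpolation in plain Python. It is a simplified illustration, not Prometheus's exact code, which handles more edge cases.

```python
import math
from typing import List, Tuple

# The PromQL an alert rule might use (5m window is an arbitrary choice).
P95_TTFT_PROMQL = ("histogram_quantile(0.95, sum by (le) "
                   "(rate(vllm:time_to_first_token_seconds_bucket[5m])))")


def histogram_quantile(q: float, buckets: List[Tuple[float, float]]) -> float:
    """Estimate quantile q from cumulative (upper_bound, count) buckets.

    Buckets must be sorted by upper bound and end with +Inf, like
    Prometheus _bucket series. The value is interpolated linearly
    inside the bucket that contains the target rank.
    """
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    if not buckets or not math.isinf(buckets[-1][0]):
        raise ValueError("buckets must end with a +Inf bound")
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for bound, count in buckets:
        if count >= rank:
            if math.isinf(bound):
                # Rank falls in the open-ended bucket: report the highest finite bound.
                return prev_bound
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count
    return prev_bound  # unreachable: the +Inf bucket always holds the total


if __name__ == "__main__":
    # Hypothetical TTFT buckets (seconds): 50 requests <= 0.1s, 90 <= 0.5s, ...
    ttft = [(0.1, 50), (0.5, 90), (1.0, 98), (math.inf, 100)]
    print(f"P95 TTFT ~ {histogram_quantile(0.95, ttft):.4f}s")
```

With the sample buckets, the 95th-percentile rank (95 of 100 requests) lands in the 0.5-1.0 s bucket, giving 0.5 + 0.5 * 5/8 = 0.8125 s. Because the result is interpolated, bucket boundaries near your TTFT target matter for alert precision.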

UAE and GCC Deployment Considerations

For deployments in AWS me-central-1, Azure UAE North/Central, OCI UAE, or sovereign clouds (Core42, Stargate UAE):

- All tools work identically in UAE regions - vLLM, Kueue, KServe, KAITO, NVIDIA GPU Operator are region-agnostic

- Data residency is enforced through provider-level policies (AWS SCPs, Azure Policy, OCI tenancy policies) rather than by the stack components themselves

- CBUAE AI Guidance compliance maps cleanly to this stack - model inventory via MLflow + KServe, drift detection via DCGM + vLLM metrics, human-in-the-loop (HITL) review via the KServe transformer pattern. See CBUAE AI Guidance for UAE Banks.

- GPU availability is strongest on AWS me-central-1 (p5/p5e instances), Azure UAE North (ND H100 v5), and Core42 sovereign cloud

For sovereign workloads requiring strict data residency, self-hosted Kubernetes on Core42 or Stargate UAE with this stack is the cleanest path.

Common Production Issues

Real failure modes observed in 2026:

GPU memory fragmentation - long-running vLLM instances accumulate fragmented GPU memory. Mitigation: periodic restarts via rolling deployment, --gpu-memory-utilization tuning.

Cold start latency - first request after scale-to-zero takes 30-120s for model loading. Mitigation: minimum replicas > 0 for latency-critical services, model preloading in sidecar containers.

Kueue preemption failures - aggressive preemption can interrupt long training jobs. Mitigation: checkpointing every N steps, priority class configuration, preemption timeout tuning.
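Checkpointing every N steps bounds how much work a preemption can destroy. The framework-agnostic sketch below saves a tiny JSON state every every_n steps using write-then-rename, so a kill mid-write never leaves a truncated checkpoint, and resumes from it on restart. The InterruptedError raised at stop_at merely simulates an eviction; a real job would write model and optimizer state to durable storage such as a PVC or object store, and the file layout here is hypothetical.

```python
import json
import os
import tempfile
from typing import Optional


def save_checkpoint(path: str, state: dict) -> None:
    """Write state atomically: temp file in the same directory, then rename."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_path, path)  # atomic rename on POSIX filesystems


def load_checkpoint(path: str) -> dict:
    if not os.path.exists(path):
        return {"step": 0, "loss": None}  # fresh start
    with open(path) as f:
        return json.load(f)


def train(path: str, total_steps: int, every_n: int,
          stop_at: Optional[int] = None) -> dict:
    state = load_checkpoint(path)
    for step in range(state["step"], total_steps):
        if stop_at is not None and step == stop_at:
            raise InterruptedError(f"simulated preemption at step {step}")
        # Placeholder for one optimizer step.
        state = {"step": step + 1, "loss": 1.0 / (step + 1)}
        if state["step"] % every_n == 0 or state["step"] == total_steps:
            save_checkpoint(path, state)
    return state


if __name__ == "__main__":
    ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    try:
        train(ckpt, total_steps=12, every_n=5, stop_at=7)
    except InterruptedError as exc:
        print(exc, "-> last checkpoint:", load_checkpoint(ckpt)["step"])
    print("resumed and finished at step", train(ckpt, total_steps=12, every_n=5)["step"])
```

In this run, steps 5 and 6 are redone after the restart; a smaller N trades more checkpoint I/O for less lost work.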

Cost runaway from upstream LLM updates - vLLM configured for one model may behave differently with a new model size. Mitigation: version-pinned model deployments, canary rollouts via KServe traffic splitting.

Mixed-precision accuracy regressions - FP8 or BF16 inference can silently degrade output quality compared to FP16. Mitigation: continuous evaluation via trajectory testing (see aiml.qa’s trajectory testing guide).

How KubernetesGuru Delivers AI/ML K8s Infrastructure

Our AI/ML Kubernetes engagements typically run 8-14 weeks, delivering a production-ready stack with:

- Deployed NVIDIA GPU Operator + Karpenter with GPU node pool configuration

- Kueue-based multi-tenant GPU scheduling with team quotas

- vLLM + KServe for LLM inference (or Triton for multi-model scenarios)

- Ray / KubeRay for training workloads

- MLflow for experiment tracking and model registry

- Observability stack with DCGM + Prometheus + Grafana + inference metrics

- Cost attribution via Kubecost/OpenCost with GPU-specific allocation

- Security: Trivy Operator, Falco, Kyverno admission policies

- Documentation and team training

For CBUAE-regulated UAE deployments, engagements explicitly map architecture to AI Guidance principles with inspection-ready documentation.

Book a free 30-minute discovery call to scope your AI/ML Kubernetes engagement.

Related Reading

- Running LLMs on Kubernetes with vLLM - deep dive on vLLM configuration and tuning

- Kubernetes Cost Optimization Tools 2026 - GPU cost is the biggest line item; the tools that manage it

- LLM Evaluation Framework Benchmark (aiml.qa) - the evaluation stack layered on top of the inference stack

- AI Agent Trajectory Testing (genai.qa) - evaluation discipline for multi-step agent deployments

- CBUAE AI Guidance for UAE Banks (mlai.ae) - regulatory framework for UAE financial sector AI deployments

Frequently Asked Questions

What is the 2026 default AI/ML stack on Kubernetes?

The consensus 2026 production stack: vLLM for LLM inference, Kueue for GPU/batch scheduling, KServe for model serving (wraps vLLM, TensorFlow Serving, Triton, etc.), Ray for distributed training and hyperparameter tuning, MLflow for experiment tracking, NVIDIA GPU Operator for driver management, DCGM Exporter for GPU observability. For Microsoft Azure AKS specifically: KAITO (Kubernetes AI Toolchain Operator). Each component is CNCF or major-vendor-backed with production adoption at scale.

What is KAITO and when should I use it?

KAITO (Kubernetes AI Toolchain Operator) is Microsoft's open-source operator for managing AI/ML workloads on Kubernetes, particularly Azure Kubernetes Service (AKS). Automates GPU node pool provisioning, model deployment (including large open-weight LLMs), and inference serving. Strong fit for AKS deployments wanting managed simplicity. For non-Azure deployments, vLLM + KServe + Kueue typically replaces KAITO's role.

vLLM vs KServe - which should I use?

They solve different problems and are often used together. vLLM is a high-throughput LLM inference engine (PagedAttention, continuous batching) - it loads and serves a model. KServe is a model serving framework that wraps vLLM (or Triton, TensorFlow Serving, Seldon MLServer) with Kubernetes-native deployment, autoscaling, canary deploys, and multi-model endpoints. Production pattern: use vLLM as the inference runtime inside a KServe InferenceService for LLM workloads.

What is llm-d and why does it matter?

llm-d (announced in May 2025) is a distributed LLM inference framework for Kubernetes, purpose-built for multi-GPU, multi-node inference of very large models (70B+ parameters). It addresses the limitations of single-node vLLM when models exceed single-node GPU capacity, using disaggregated serving patterns (prefill/decode separation), KV cache offloading, and cross-node tensor parallelism. It is an emerging category in 2026 for organizations running frontier open-weight models at production scale.

What is Kueue and why is it critical for AI/ML workloads?

Kueue (a Kubernetes SIG Scheduling subproject) is a Kubernetes-native job queueing and scheduling system designed for batch and AI/ML workloads. It manages multi-tenant GPU sharing, fair-share scheduling across teams, quota enforcement, and preemption. Without Kueue, GPU clusters often suffer from head-of-line blocking (one large training job starves all other teams). With Kueue, GPU utilization typically rises from 25-35% baseline to 60-85% with fair resource distribution.

Do I need the NVIDIA GPU Operator on my cluster?

Yes, for production GPU workloads. The NVIDIA GPU Operator automates GPU driver installation, NVIDIA Container Runtime configuration, device plugin deployment, DCGM Exporter for monitoring, and GPU feature discovery. Replaces manual driver management and node-by-node configuration. Required for MIG (Multi-Instance GPU), time-slicing, and vGPU functionality. Most 2026 managed Kubernetes offerings (EKS, AKS, GKE) support the Operator with a single-command install.

What is Ray and how does it fit with Kubernetes?

Ray is a distributed computing framework for Python, particularly strong for ML training, hyperparameter tuning (Ray Tune), and reinforcement learning. On Kubernetes, the KubeRay operator manages Ray clusters as native Kubernetes resources. Typical use: train large models across multiple GPU nodes, run hyperparameter sweeps, orchestrate distributed reinforcement learning. Complementary to vLLM (inference) - Ray for training, vLLM for serving.

How do I choose between vLLM, Triton, and TensorRT-LLM?

Complementary choices. vLLM is open-source, optimizes throughput via PagedAttention, excellent for general LLM serving. Triton (NVIDIA Inference Server) is a multi-model, multi-framework server - strong when serving diverse model types (LLM + vision + classical ML). TensorRT-LLM (NVIDIA) offers highest performance via GPU-specific optimization but is NVIDIA-only and more complex to deploy. Production pattern: vLLM for LLM-dominated workloads, Triton when model diversity matters, TensorRT-LLM when peak performance justifies operational complexity.
